(Each GPU card has 5120 CUDA® cores)

- Deep Learning

- AI Training

- AI Inference

- HPC

- The Netherlands

- Ubuntu 22.04 Server

- € 2179.2 / month

- € 544.8 / week

- € 108.97 / day

- € 0.13 / minute

- € 0 - setup fee

(all prices without VAT)

For 1 month you will pay: 2179.2 Euro
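As a rough illustration of how the listed rates compare, the sketch below multiplies each rate by a number of billing units. It assumes the cost is simply rate times quantity with no proration or discounts, which this page does not confirm. The names `RATES_EUR` and `rental_cost` are hypothetical and exist only for this example.

```python
from decimal import Decimal

# Rates copied from the price list above (EUR, without VAT).
RATES_EUR = {
    "month": Decimal("2179.2"),
    "week": Decimal("544.8"),
    "day": Decimal("108.97"),
    "minute": Decimal("0.13"),
}


def rental_cost(quantity: int, unit: str) -> Decimal:
    """Cost of `quantity` billing units of one kind.

    Assumption: plain rate * quantity; actual billing rules may differ.
    """
    if quantity < 0:
        raise ValueError("quantity must be non-negative")
    return RATES_EUR[unit] * quantity


if __name__ == "__main__":
    # Compare the same calendar span billed at different granularities.
    print("4 weeks      :", rental_cost(4, "week"), "EUR")
    print("7 days       :", rental_cost(7, "day"), "EUR")
    print("1440 minutes :", rental_cost(24 * 60, "minute"), "EUR")
```

Under that assumption, four weeks at the weekly rate cost the same as one month, while seven days at the daily rate or a full day at the per-minute rate cost more than the corresponding weekly or daily price.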

Available on 30 May 2026, 00:00 CET

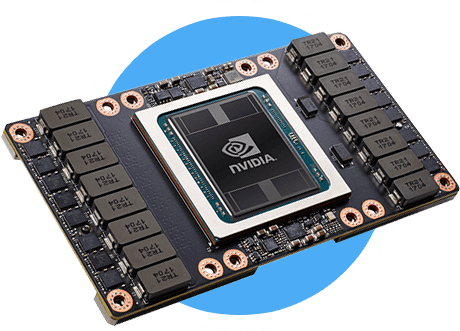

The NVIDIA® Tesla® V100 is a highly efficient GPU based on the NVIDIA® Volta architecture. Tesla® V100 accelerators connected by NVLink™ technology provide a throughput of 160 Gb/s, which suits a wide range of workloads, from rendering and HPC to training AI models.

Tesla V100 GPUs are great for:

- Deep Learning

- Rendering

- High-performance computing (HPC)

- GPU: Volta GV100 architecture

- Memory: 16 GB HBM2

- NVIDIA CUDA cores: 5120

- Memory Bandwidth: 900 GB/s